Bridging the Gap: How GeoAI enables better decision-making

Artificial intelligence isn’t on its way; it’s already here.

AI already has many applications, from Instagram filters to driverless vehicles. Now imagine combining AI with remote sensing technology. When applied to satellite images and drone footage, AI can take strategic decision-making to new heights, enabling many organizations to make better investment decisions.

Take the telecom industry. Every year, telecom operators collectively invest billions of US dollars to roll out critical infrastructure. With that kind of money on the line, you want to be well prepared when deciding where to roll out, which can be a long, tedious, and costly process. Expansion means surveying land, installing physical cables, and mapping out coverage areas, all of which require time, money, and people working on the ground. This process can take months or even years to finish.

What if you could skip many of these manual processes? With major improvements in remote sensing and reductions in its cost, we can now collect critical data at granular levels and run sophisticated analytics at high speed and scale without ever setting foot outdoors. All of this is made possible with the help of AI.

In this article, we will take a deep dive into some of the fundamental concepts behind geospatial artificial intelligence and machine learning.

Enter GeoAI

Satellite images have become a groundbreaking data source for environmental conservation, humanitarian efforts, and poverty alleviation projects. Building on the tremendous recent progress in the field of artificial intelligence, our team developed GeoAI: an artificial intelligence toolkit to deploy geospatial AI applications and solutions at scale. Applying cutting-edge machine learning on top of our comprehensive GeoData warehouse, we are able to provide a faster and more efficient way to execute geospatial data-driven solutions for companies and organizations everywhere.

Computer Vision and its practical applications

Computers can now extract information from satellite and drone images using a technique called Computer Vision (CV). But how does it work? Imagine giving your computer a way to recognize things in images. If the camera is the computer’s eye, CV is the part of the brain responsible for “understanding” images. It mimics human vision, using algorithms to process massive amounts of information and identify patterns in images or videos.

Fig. 1 The four common CV tasks. Source

We will take you through the four most common CV tasks and share how these can be applied to satellite images.

I. Image Classification

Does the image above contain balloons?

The answer is simple: yes. To a human, this is very intuitive, but for computers, it has been a difficult question to answer for the longest time. Although CV has been around since the 1960s, recent advancements in deep learning have helped propel the field. This has allowed machines to distinguish between different types of images, a task known as Image Classification. Specifically, Image Classification allows a computer to assign a label or category to a given image.

If we apply the same idea to satellite images, we can define labels based on what we need, for use cases like land use and land cover classification, wealth classification, poverty estimation, school mapping, and informal settlement detection, and have the AI assign the right label to each image.

Fig. 2 Examples of Image Classification via CV.

Source: EuroSAT Dataset (Helber, Patrick, et al. "Eurosat: A novel dataset and deep learning benchmark for land use and land cover classification." IEEE Journal of Selected Topics in Applied Earth Observations and Remote Sensing 12.7 (2019): 2217-2226.)

Governments, corporations, and civic groups in developing countries can all use Image Classification to study far-off locations without having to visit them, saving time, labor, and funding in the process. Figure 2 shows the wide range of labels that can be used for categorization in CV. With a few clicks, Image Classification now allows organizations to survey many hectares of land in seconds, a task that previously would have taken weeks to complete.
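To make "assign one label to one image" concrete, here is a minimal sketch in Python. It is not how GeoAI or any production model works: real classifiers are deep neural networks trained on labeled imagery. As a stand-in, this toy picks the label whose hypothetical average color is closest to the tile's average color. The labels, the prototype colors, and the sample tile are all assumptions made up for illustration.

```python
from math import dist

# Hypothetical class prototypes: one average (R, G, B) color per label.
# A trained model would learn far richer features than a single color.
PROTOTYPES = {
    "forest": (34, 85, 34),
    "water": (20, 60, 140),
    "residential": (150, 140, 130),
}


def mean_color(tile):
    """Average the (R, G, B) pixels of a tile given as rows of pixels."""
    pixels = [px for row in tile for px in row]
    n = len(pixels)
    return tuple(sum(px[c] for px in pixels) / n for c in range(3))


def classify_tile(tile):
    """Return the single label whose prototype is nearest to the tile's mean color."""
    avg = mean_color(tile)
    return min(PROTOTYPES, key=lambda label: dist(avg, PROTOTYPES[label]))


# A made-up 2x2 tile of bluish pixels.
tile = [
    [(18, 58, 135), (22, 63, 142)],
    [(25, 61, 138), (19, 57, 145)],
]
print(classify_tile(tile))  # water
```

The key point is the shape of the output: the whole image goes in, and exactly one label comes out.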

II. Object Detection

So the computer can classify images; how about asking it to find the specific location of objects? Let’s go back to telcos needing to expand operations in different locations. With available satellite imagery, Object Detection can be used to locate specific objects in any area, saving companies the need to physically survey on the ground.

In a recent project, we partnered with an industry leader in the Philippines to identify residential areas using Object Detection. We ran the task to detect where residential buildings are, taking into account house size (footprint) and roof characteristics to assess the socioeconomic status of an area.

We can also train an AI model to look for other distinguishable objects, such as vehicles, sports fields, oil tanks, and parking lots in aerial images. Let’s say you want to count the number of swimming pools in an area. Instead of having to manually go over Google Maps, you can train an Object Detection model to learn the characteristics of swimming pools (e.g., blue and rectangular) and accomplish the task in a matter of minutes.

Fig. 3 Images on the right show where swimming pools were detected in the given area.

Aside from companies planning for more data-driven rollout strategies, these tasks can be extremely helpful for urbanization rate evaluations, taxation forecasting, and public-health policy research.
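Going back to the swimming pool example, a detector typically returns a list of bounding boxes, each with a confidence score, and often reports the same object more than once. A common post-processing step is non-maximum suppression: drop low-confidence boxes, then keep only the best box among those that overlap heavily. The sketch below assumes a hypothetical detector has already produced the `detections` list; the box coordinates, scores, and thresholds are invented for illustration.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x_min, y_min, x_max, y_max)."""
    ix = max(0, min(a[2], b[2]) - max(a[0], b[0]))
    iy = max(0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = ix * iy
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union else 0.0


def non_max_suppression(detections, score_min=0.5, iou_max=0.5):
    """Keep the highest-scoring box from each group of heavily overlapping boxes."""
    confident = [d for d in detections if d["score"] >= score_min]
    kept = []
    for det in sorted(confident, key=lambda d: d["score"], reverse=True):
        if all(iou(det["box"], k["box"]) <= iou_max for k in kept):
            kept.append(det)
    return kept


# Made-up output of a hypothetical swimming pool detector.
detections = [
    {"box": (10, 10, 30, 20), "score": 0.92},
    {"box": (12, 11, 31, 21), "score": 0.80},  # overlaps the first box
    {"box": (60, 40, 75, 50), "score": 0.88},
    {"box": (90, 90, 95, 95), "score": 0.30},  # below the score threshold
]
pools = non_max_suppression(detections)
print(len(pools))  # 2
```

Unlike Image Classification, the output here is a location for every object found, which is what makes counting possible.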

III. Semantic Segmentation

Now, imagine the computer assigning a label to each pixel of an image. Let’s say you feed the algorithm images containing roads. Semantic Segmentation can help you determine whether or not each pixel in the image belongs to a road. Using this technique, you can label every pixel in the image as “road” or “not road”.

Fig. 4 Using CV to identify roads. Source

We use this application in particular to extract road network data, but it can also be used for more granular land use and land cover (clutter) segmentation, such as agricultural mapping and water conservation efforts.
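The "road" or "not road" decision per pixel can be sketched in a few lines. We assume a hypothetical segmentation model has already produced a road probability for every pixel; the values in `prob_map` and the 0.5 threshold are made up for illustration. Thresholding turns the probabilities into a binary mask, from which simple statistics such as road coverage follow.

```python
def segment_roads(prob_map, threshold=0.5):
    """Label each pixel 1 ("road") or 0 ("not road") from its road probability."""
    return [[1 if p >= threshold else 0 for p in row] for row in prob_map]


def road_fraction(mask):
    """Share of pixels in the mask that are labeled as road."""
    total = sum(len(row) for row in mask)
    return sum(sum(row) for row in mask) / total


# Made-up per-pixel road probabilities for a 3x4 image.
prob_map = [
    [0.05, 0.10, 0.90, 0.08],
    [0.07, 0.85, 0.95, 0.12],
    [0.80, 0.92, 0.20, 0.03],
]
mask = segment_roads(prob_map)
for row in mask:
    print(row)
print(f"road coverage: {road_fraction(mask):.0%}")
```

Note that the mask says which pixels are road, but not how many separate roads there are. Telling individual objects apart is the job of the next task.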

IV. Instance Segmentation

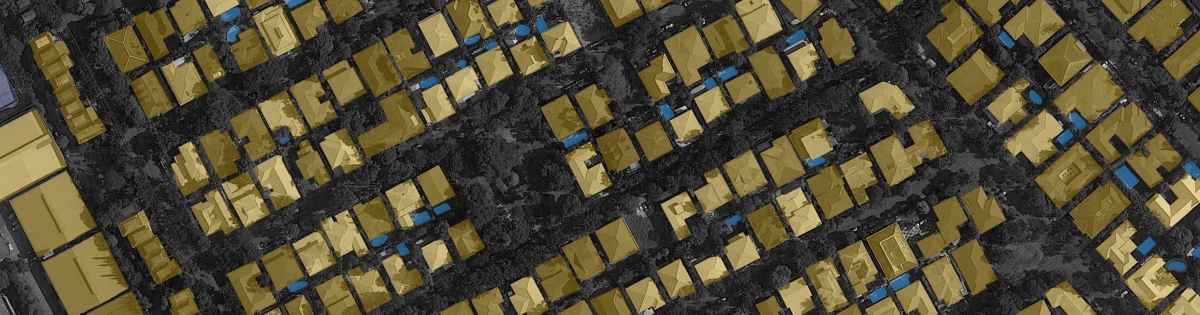

Lastly, Instance Segmentation combines the benefits of both Object Detection and Semantic Segmentation: it identifies not only the location of each object, but also the pixel mask of each particular object.

One practical use of Instance Segmentation is in the architecture and construction industries, where basemaps and footprints of structures can be digitized, studied, and improved.

Fig. 5 Instance Segmentation as applied on a satellite image of a residential area.

This methodology allows researchers to use satellite imagery to identify the areas and perimeters of specific buildings and residential sites, so we also use it to measure urban development. This information supports a range of use cases. For example, public sector organizations can use the output to estimate the average level of affluence in an area, again saving the time and money that field surveys would cost.
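To illustrate what "a pixel mask per object" gives you, the sketch below separates a binary building mask into individual buildings and measures each footprint. It uses connected-component labeling as a simple stand-in; a trained Instance Segmentation model predicts per-object masks directly and can separate buildings that touch, which this toy cannot. The mask and the `pixel_area_m2` value are assumptions made up for illustration.

```python
from collections import deque


def label_instances(mask):
    """Give each 4-connected blob of 1-pixels its own id (1, 2, ...)."""
    rows, cols = len(mask), len(mask[0])
    labels = [[0] * cols for _ in range(rows)]
    count = 0
    for r in range(rows):
        for c in range(cols):
            if mask[r][c] != 1 or labels[r][c] != 0:
                continue
            count += 1
            labels[r][c] = count
            queue = deque([(r, c)])
            while queue:  # breadth-first flood fill from the seed pixel
                y, x = queue.popleft()
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    inside = 0 <= ny < rows and 0 <= nx < cols
                    if inside and mask[ny][nx] == 1 and labels[ny][nx] == 0:
                        labels[ny][nx] = count
                        queue.append((ny, nx))
    return labels, count


def footprint_areas(labels, count, pixel_area_m2=1.0):
    """Footprint area per instance id, given the ground area of one pixel."""
    areas = {i: 0.0 for i in range(1, count + 1)}
    for row in labels:
        for instance_id in row:
            if instance_id:
                areas[instance_id] += pixel_area_m2
    return areas


# Made-up building mask with two separate buildings.
building_mask = [
    [1, 1, 0, 0, 0],
    [1, 1, 0, 1, 1],
    [0, 0, 0, 1, 1],
    [0, 0, 0, 1, 1],
]
labels, count = label_instances(building_mask)
areas = footprint_areas(labels, count, pixel_area_m2=0.25)  # hypothetical resolution
print(count, areas)  # 2 {1: 1.0, 2: 1.5}
```

Per-building areas like these are the kind of output that can feed into measures of urban development or affluence.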

Effective planning for efficient operations

With these fundamental concepts behind computer vision, we built GeoAI to provide companies and organizations with custom, leading-edge solutions for strategic investment decisions. We know the challenges are many: depending on your sector, manual data collection and analysis could take weeks or even years to complete, and still not provide the granularity needed to make informed decisions. Using AI, we developed tools that have changed how our cross-industry clients collect, use, and analyze data. Whether in telco, utilities, construction, real estate, or the public sector, we’re excited to explore how geospatial AI can make decision-making easier.

Think your organization could use computer vision or geospatial analytics? Schedule a consult here!

Thinking Machines is a technology consultancy building AI & data platforms to solve high-impact problems.

Apply now at https://thinkingmachin.es/careers and be part of a team that’s civic-minded, innovative, and results-oriented!