Harnessing the Potential of Claims Data To Detect Insurance Fraud

Automate the investigation of potential fraud, a previously manual and tedious process

Evaluate 3.2 million claims and use 61 variables on providers, location, and disease

Narrow down the number of potentially fraudulent claims to investigate using 5 machine learning models

We worked with a health insurance company in the US to develop a fraud detection model that would help them identify claims outliers for further investigation. Thinking Machines developed a tool to survey all pneumonia and cataract case data over a 3-year period.

Identifying Suspicious Claims in an Accurate and Scalable Way

Although our client had pieced together different detection tools in the past, none had ever successfully identified a fraudulent case. “Even if a historical case had been identified, there’s no way we could scale what was essentially a manual and time-intensive process,” says one company project manager.

The task was compounded by the fact that fraudulent behavior varies widely by disease. For instance, cataract fraud is commonly done in volume (repeating unnecessary procedures) whereas pneumonia fraud is commonly done via upcasing (charging higher rates). This meant that no single existing solution could fully handle the volume and variety of the client’s data.

The most salient barrier was more deep-seated: The company did not possess a definitive dataset of fraudulent and non-fraudulent claims. Without such a training dataset, it would be exceedingly difficult to develop an algorithm that could output whether a claim is fraudulent with high certainty.

Using Outlier Probabilities to Kickstart the Creation of a Training Dataset

Given the constraints, our team resolved to create a tool that would employ unsupervised machine learning models to discover outliers within the healthcare data. An outlier is any observation that is numerically distant from most of the other samples; outliers may be generated based on factors such as geographical area, incidence rates, or healthcare provider. Although imperfect, these outliers are a good starting point for possible fraud detection because they surface a manageable subset of claims that should undergo further investigation.
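To make "numerically distant from most of the other samples" concrete, here is a minimal sketch using a median-based (robust) z-score on a single numeric field. This is only an illustration, not the project's method: the function names, the sample amounts, and the 3.5 cutoff are all assumptions chosen for the example.

```python
from statistics import median

def robust_z_scores(values):
    """Score each value by its distance from the median, scaled by the
    median absolute deviation (MAD). Less sensitive to extreme values
    than a mean/standard-deviation z-score."""
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        # All values (or at least half of them) are identical: no spread to scale by.
        return [0.0 for _ in values]
    # 0.6745 makes the score comparable to a standard z-score for normal data.
    return [0.6745 * (v - med) / mad for v in values]

def flag_outliers(values, threshold=3.5):
    """Return True for values whose robust z-score exceeds the threshold.
    The 3.5 cutoff is a common rule of thumb, assumed here for illustration."""
    return [abs(z) > threshold for z in robust_z_scores(values)]

# Hypothetical claim amounts for one provider; the last one is far from the rest.
claim_amounts = [120, 135, 128, 140, 131, 125, 980]
flags = flag_outliers(claim_amounts)
print(flags)
```

In practice the same idea would be applied within groups (for example per provider or per region), so that a claim is compared against its peers rather than against all claims.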

Data Preparation

We worked closely with the client’s IT team to combine diverse databases such as claims, provider, member, and medical audits data. Because the data owners apply slightly different terms to refer to the same fields, we cleaned and converted the data into a unified form. We also worked with subject matter experts to integrate external data from organizations such as the World Health Organization. The result: a robust data foundation to refine our algorithms.
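The field-unification step described above might look something like the following sketch. The alias table, field names, and sample record are hypothetical and exist only to show the idea of mapping each owner's terminology onto one canonical schema.

```python
# Hypothetical alias table: each canonical field lists the names that
# different data owners might use for it. Not taken from the client's data.
FIELD_ALIASES = {
    "provider_id": {"provider_id", "prov_id", "hospital_code"},
    "claim_amount": {"claim_amount", "amt_claimed", "claim_amt"},
    "diagnosis": {"diagnosis", "dx", "illness"},
}

# Invert the table so any alias can be looked up directly.
ALIAS_TO_CANONICAL = {
    alias: canonical
    for canonical, aliases in FIELD_ALIASES.items()
    for alias in aliases
}

def normalize_record(record):
    """Rename a record's keys to canonical field names.
    Keys are lowercased and spaces become underscores before lookup;
    unknown keys are kept under their cleaned name."""
    normalized = {}
    for key, value in record.items():
        clean_key = key.strip().lower().replace(" ", "_")
        normalized[ALIAS_TO_CANONICAL.get(clean_key, clean_key)] = value
    return normalized

# A made-up row as it might arrive from one source system.
claims_row = {"Prov ID": "H-001", "Amt Claimed": 1200.0, "DX": "pneumonia"}
normalized = normalize_record(claims_row)
print(normalized)
```

Keeping the mapping in a single table makes it easy for subject matter experts to review and extend without touching the transformation code.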

Ensemble Predictive Modeling

Since our client needed to avoid the use of hard-coded rules, our outlier model was based on unsupervised machine learning algorithms. We combined 5 unsupervised techniques that automatically computed boundaries between normal and outlying claims. Through ensemble methods, we reduced outlier variance among the various algorithms and assigned a weighted effect score relative to each claim’s features (disease-related, hospital-related, patient-related). The higher the effect score, the more likely it is that the corresponding feature points to actual fraud.
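One simple way to combine scores from several detectors is to rescale each detector's output to a common range and then take a weighted average. The sketch below assumes this approach for illustration only; the source does not say how the ensemble or the effect scores were actually computed, and the detector names, weights, and scores are invented.

```python
def min_max_scale(scores):
    """Rescale scores to [0, 1] so detectors with different units are comparable."""
    lo, hi = min(scores), max(scores)
    if hi == lo:
        return [0.0] * len(scores)
    return [(s - lo) / (hi - lo) for s in scores]

def ensemble_scores(detector_scores, weights=None):
    """Weighted average of rescaled scores, one combined score per claim.
    detector_scores maps a detector name to its list of per-claim scores
    (higher means more anomalous). Equal weights are assumed by default."""
    names = list(detector_scores)
    if weights is None:
        weights = {name: 1.0 for name in names}
    total_weight = sum(weights[name] for name in names)
    scaled = {name: min_max_scale(detector_scores[name]) for name in names}
    n_claims = len(scaled[names[0]])
    return [
        sum(weights[name] * scaled[name][i] for name in names) / total_weight
        for i in range(n_claims)
    ]

# Invented raw scores from two hypothetical detectors for three claims.
raw = {
    "detector_a": [0.1, 0.2, 0.9],
    "detector_b": [10.0, 30.0, 50.0],
}
combined = ensemble_scores(raw)
print(combined)
```

Averaging across detectors tends to dampen the quirks of any single algorithm, which is one common motivation for ensembling outlier scores.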

Visualization for Outlier Investigation

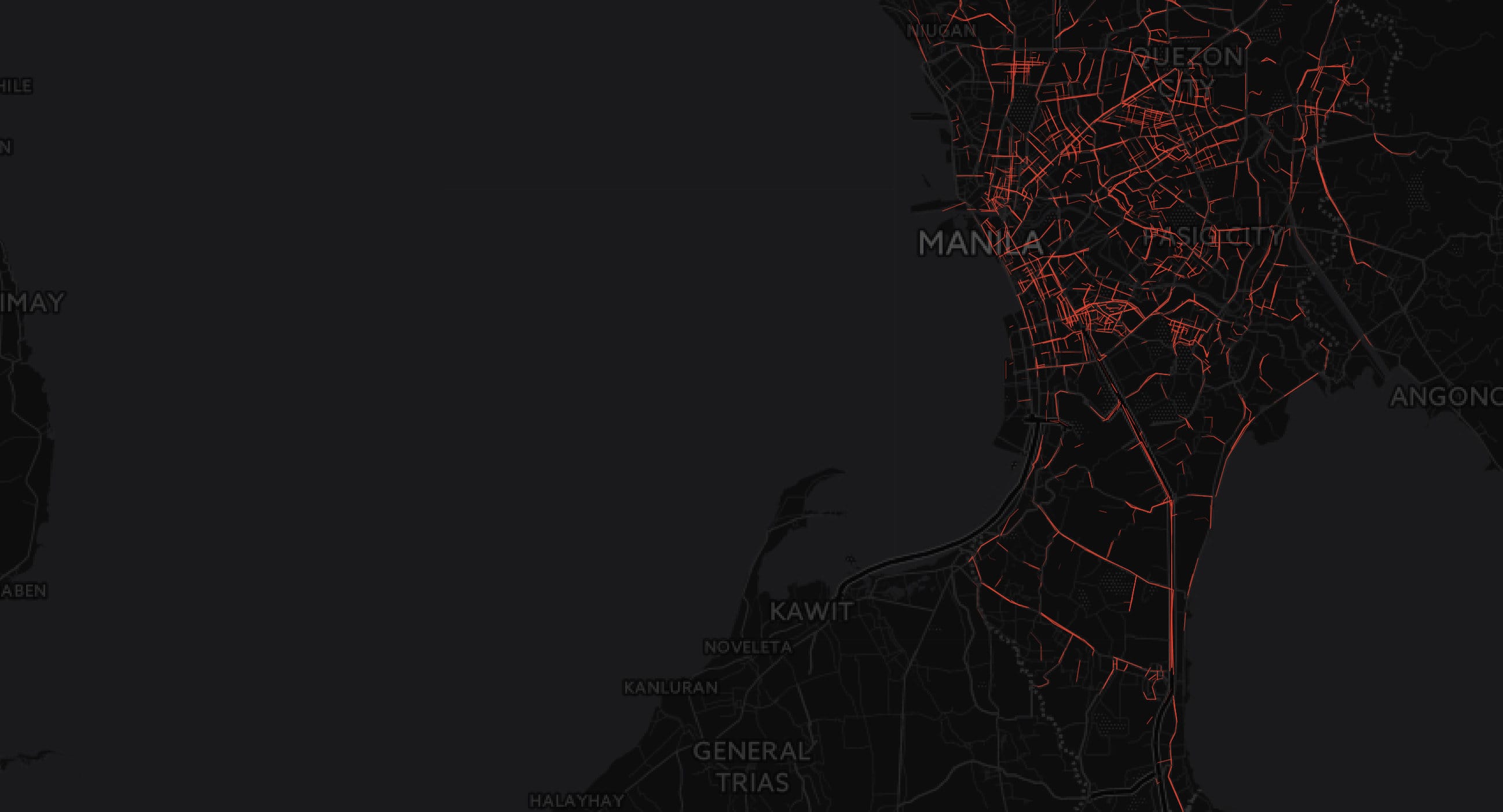

We created a number of fraud detection views to align with our client’s Microsoft PowerBI environment. The visualizations allow fraud analysts to investigate key questions such as:

- Which claims are outliers for a certain set of user-defined filters?

- Where are these outliers located?

- What features did the models tag as most relevant in their outlier recommendation?

Robust Predictions Using Majority Ensemble

- Microsoft Azure

- Microsoft PowerBI

- Isolation Forest

- Principal Component Analysis

- Local Outlier Factor

- HDBSCAN Clustering

- One Class Support Vector Machine
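A majority ensemble over the five detectors listed above can be sketched as a simple vote: a claim is recommended as an outlier only when more than half of the detectors flag it. The per-detector flags below are made up for illustration, and the strict-majority rule is an assumption; the source does not specify the exact voting rule used.

```python
def majority_vote(flags_by_detector):
    """Return one boolean per claim: True when a strict majority of
    detectors flagged that claim as an outlier."""
    detectors = list(flags_by_detector.values())
    n_detectors = len(detectors)
    n_claims = len(detectors[0])
    return [
        sum(flags[i] for flags in detectors) * 2 > n_detectors
        for i in range(n_claims)
    ]

# Illustrative flags for four claims (True = flagged). These are not real
# outputs; each list stands in for one detector's per-claim decision.
flags_by_detector = {
    "isolation_forest": [True, False, True, False],
    "pca":              [True, False, False, False],
    "local_outlier":    [True, True, False, False],
    "hdbscan":          [False, False, True, False],
    "one_class_svm":    [True, False, True, False],
}
recommended = majority_vote(flags_by_detector)
print(recommended)
```

Requiring agreement from several detectors trades some recall for fewer false alarms, which keeps the list handed to fraud analysts manageable.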

Productionalizing the Concept

The healthcare company now has a tool that cheaply, quickly, and significantly improves fraudulent claims detection. In testing the model across 4 diseases containing an aggregate of over 2.8 million claims, Thinking Machines’ solution was able to recommend 4,562 outlier claims for further investigation by the client’s fraud analysts. Although this comprised only 0.16% of total claims evaluated, the results are promising when viewed through the lens of the several million claims that the client processes each day.

Our client saved a significant amount of the time needed to compile a dataset with definitive labels of fraudulent and non-fraudulent claims. This approach will help them create a gold standard training dataset, advancing from unsupervised techniques to supervised techniques using verified cases of fraud. Moreover, the visualization dashboards will empower individual fraud analysts to more confidently and effectively handle the day-to-day deluge of healthcare claims.

All in all, the tool has generated valuable momentum for internal champions to push for improving the model and operationalizing the fraud-detection system across the company.